"More and more of our fellow citizens are going to go to their AI agents and ask: 'Who should I vote for?'" the President of the Republic warned last November during the Congress of Mayors of France. The stakes are immense, as it is impossible to evaluate how these conversational agents, through their responses, will influence citizens' votes—starting with the municipal elections in March 2026. On what basis will generative AIs produce these answers? Will they be pragmatic or utopian? And what authority will have fed their categories?

AI is settling into our homes, into the sphere of the intimate. We are surrendering to it the direction of our opinions, the final word in the debate. What kind of intelligence are we attributing to it that we would delegate the citizen's vote to it, or hand over the analysis of our romantic situations? Can it truly live up to the stakes we are entrusting to it?

From the art of conversation to the question of intelligence

It is the conversational nature of the tool that leads us to consider it our equal. If I ask a question and you understand me, then I recognize you as an interlocutor. Sometimes I reject its answers, or I seek out the kinds of discussion where the AI performs best; but in the flow of conversation, the technical nature of the tool fades away, as does my distrust. I end up assuming that I am indeed interacting with an "intelligence." We represent generative AI as an alter ego only because it presents itself to us as such.

Once this representation is established, all that remains is for it to shine. This "intelligence," which strangely resembles me, quickly manifests capabilities that surpass my own. It has much more knowledge than I do, manipulates it faster, and can answer all my questions. Its intelligence seems to continue where mine ends.

Knowledge without a compass: the model's flaws

The philosopher Jean-Marie Schaeffer suggests tempering the awe that AI can generate in us. It is not the software that created the knowledge it exploits, but rather human specialists in the relevant epistemic field. The software formats this knowledge to offer a response, but the algorithms have no way of sorting the texts they vacuum up from the web; they classify them according to popularity, without attributing different weight to scientific and non-scientific sources, or specialist and generalist ones.

Even more concerning: having exhausted human-produced content for training, models now lack "fresh blood." The risk? That they end up training on their own productions. This vicious circle, called Model Collapse, acts exactly like a photocopy of a photocopy: the result becomes increasingly corrupted. By feeding on this artificial material, models amplify their own flaws and eventually produce nothing but a distorted caricature of humanity.
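The photocopy analogy can be made concrete with a toy simulation. This is a minimal sketch, not a description of how real language models are trained: the "model" here only counts how often each item appears, and the names `train`, `generate`, and `simulate_collapse` are hypothetical, chosen for illustration. Each generation learns from the previous generation's output alone, and the number of distinct "ideas" that survive is tracked.

```python
import random
from collections import Counter


def train(corpus):
    """'Train' a toy model: simply record how often each item appears."""
    return Counter(corpus)


def generate(model, size, rng):
    """'Generate' a new corpus by sampling items in proportion to their learned frequency."""
    items = list(model.keys())
    weights = list(model.values())
    return rng.choices(items, weights=weights, k=size)


def simulate_collapse(vocabulary_size=1000, generations=30, seed=0):
    """Return the number of distinct items remaining after each generation."""
    rng = random.Random(seed)
    # Generation 0 stands in for human-produced content: every "idea" appears once.
    corpus = list(range(vocabulary_size))
    diversity = [len(set(corpus))]
    for _ in range(generations):
        model = train(corpus)                       # learn only from the previous output
        corpus = generate(model, len(corpus), rng)  # produce the next "photocopy"
        diversity.append(len(set(corpus)))
    return diversity


if __name__ == "__main__":
    for generation, distinct in enumerate(simulate_collapse()):
        print(generation, distinct)
```

In this sketch an item that fails to be sampled in one generation can never reappear, so diversity can only shrink: rare items vanish first and the common ones take up more and more room. Real systems are far more complex, but the mechanism illustrated is the same kind of loss.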

Fake it until you make it

If the narrative of AI converging with human intelligence leads us astray, it is primarily to better sell us on AI. We are our own unit of measurement, the master standard. The idea that a technological object is approaching the human level confers immense value upon it.

The best example of this is the euphemism attached to the answers AI provides, the famous disclaimer: "XXXGPT can make mistakes. It is recommended to verify important information." This attributes an "accidental" character to errors that are actually structural, and which we have little prospect of resolving in the medium term. It is worth remembering that the term "Artificial Intelligence" itself was coined in the mid-1950s by John McCarthy and a few fellow pioneers, in a proposal written to secure funding for a summer workshop at Dartmouth College.

The pitch has changed little. Part of every product presentation and interview is devoted to the imminence of an AI capable of equaling humans: Artificial General Intelligence (AGI). For AGI to be achieved, warns François Chollet (deep learning specialist and one of the creators of ARC-AGI, a benchmark for measuring AI progress), algorithms will need to be capable of "handling entirely new tasks that share only abstract similarities with previously encountered situations," which seems to contradict existing training principles. But the narrative persists.

ChatGPT rates itself more highly than cognitive scientists do. When asked to describe its own "intelligence," it explains that it "excels at recognizing patterns," can process a volume of data at an "inhuman speed," and is a "mirror of human intelligence." But for specialists like Daniel Andler, AI does not understand the deep meaning of the information it manipulates. It cannot learn from subjective experience or create with intention. Its "creativity" is statistical: it recombines what exists, without intuition, discernment, or inspiration.

The comparison between artificial intelligence and human intelligence is a marketing argument that works against itself. Firstly, because it disappoints us when, after a dozen messages, the chatbot starts going in circles, contradicts itself, and forgets what it previously stated. But above all, because it prevents us from seeing the real capabilities of generative AI, which in other situations exceeds the expectations of its programmers. It is perhaps there that we should look for the marvel.

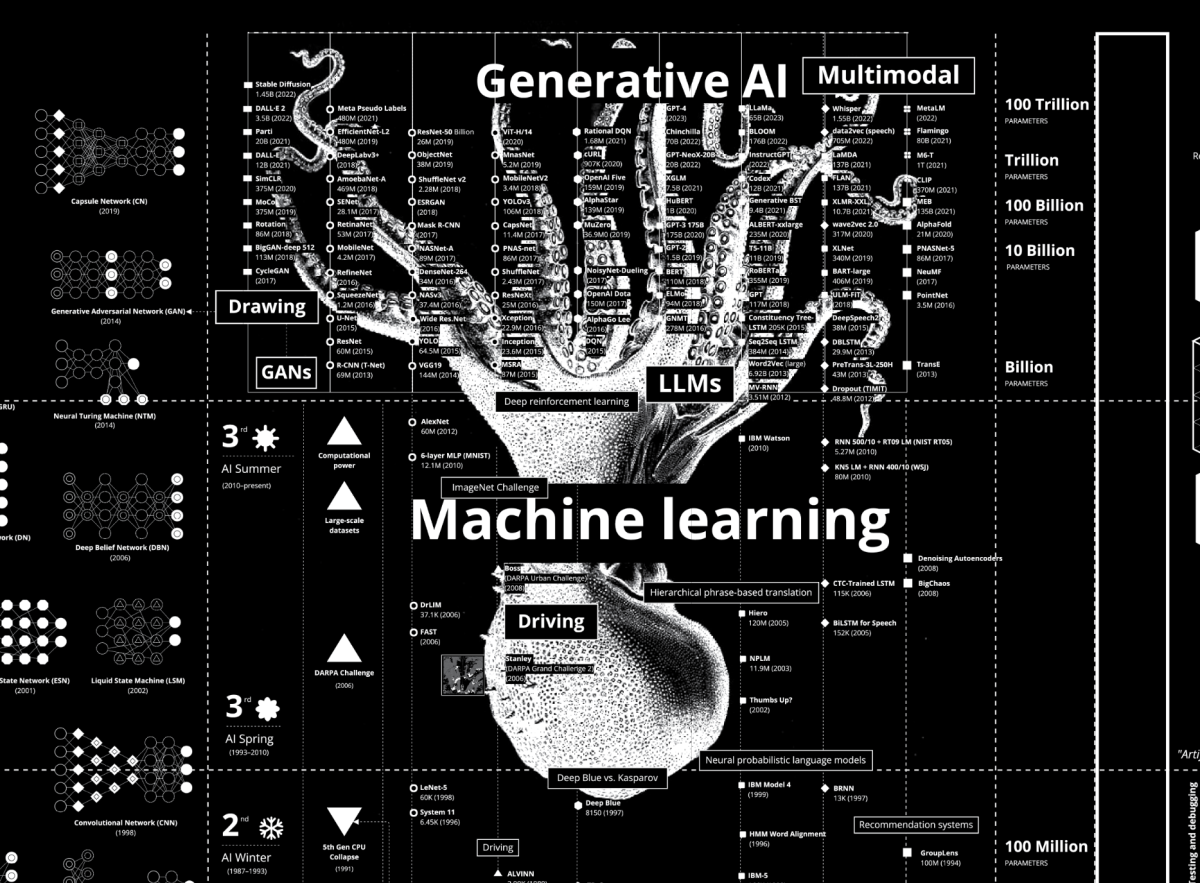

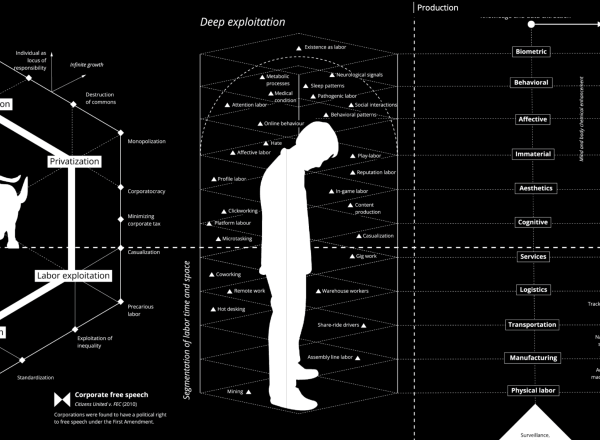

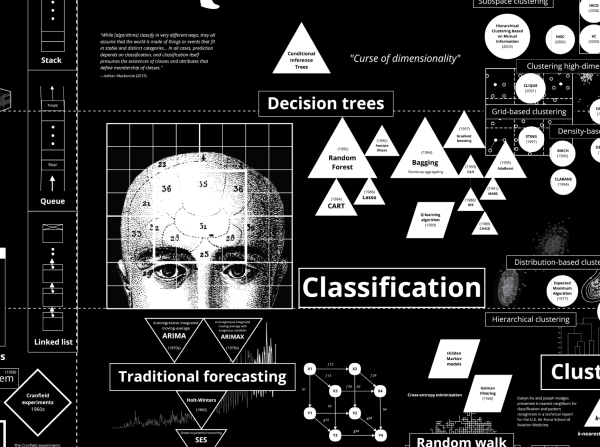

Illustration: Calculating Empires: A Genealogy of Technology and Power Since 1500, by Kate Crawford and Vladan Joler (2023)