Leaders are constantly required to make decisions. Each of them, even the most trivial, has repercussions, altering both collective and individual trajectories. Since the act of deciding is so crucial, it is incumbent upon the decision-maker to make the best possible choice. We aspire to decide well in all circumstances: at the office, at home, as a team, or alone. But what exactly is a good decision?

For us, a good decision is first and foremost rational: informed, coherent, objective, and, if possible, formalized and quantified. It maximizes the objective assigned to it, processes as much information as possible, and takes the constraints of the situation into account. This rationality reassures us of our own relevance (that we judged fairly and accurately), and it also guarantees that others will accept our decision. Rationality is our common language: the more rational a decision is, the more sense it makes to others, and the less rejection it provokes.

The perfect decision

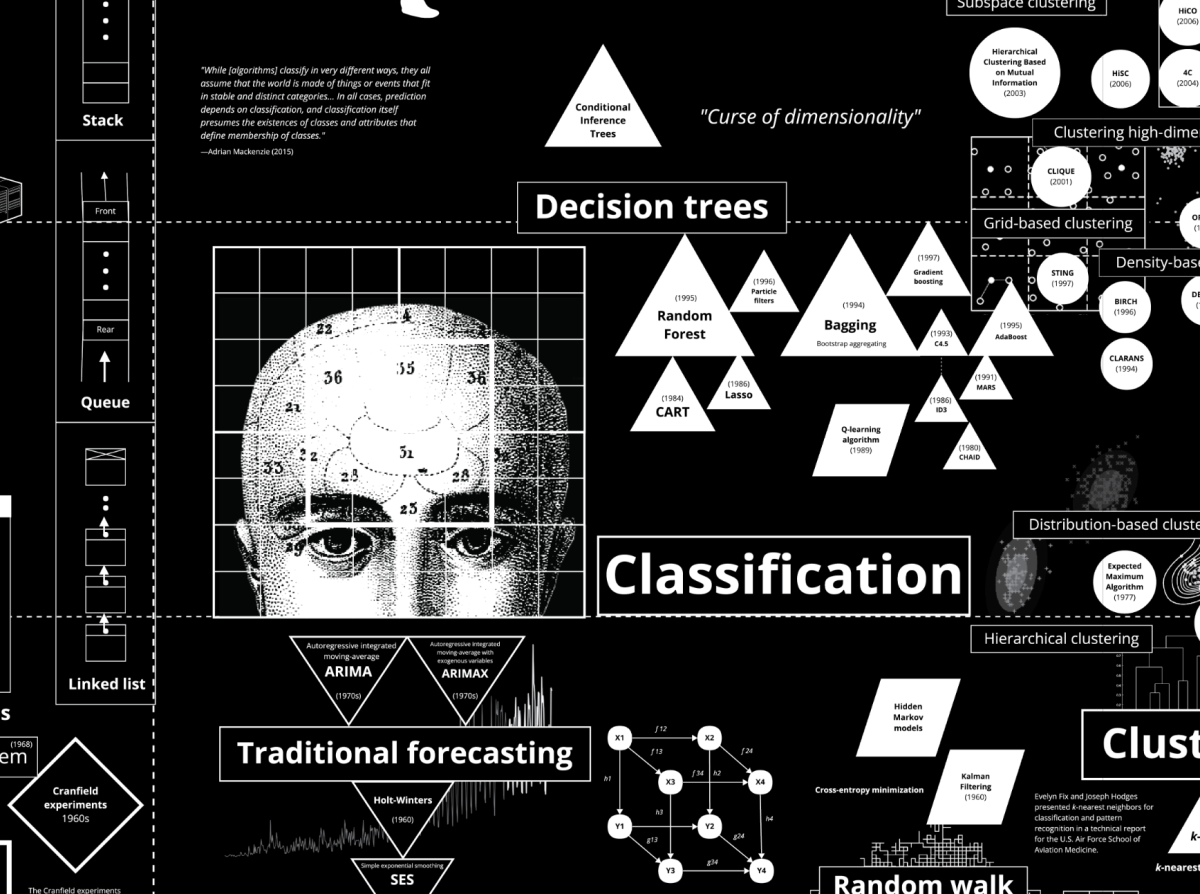

Every good decision is preceded by a phase of rigorous analysis focusing on two types of data: tangible data relating to the past and present (the province of statistical analysis), and prospective data concerning an uncertain future that has yet to unfold (the province of probability analysis). It is therefore unsurprising that the decision sciences, grouped under decision theory, turned to mathematics very early on to build the rational language of choice.

"One of the objects of decision theory is to provide the means to construct quantified descriptions of problems, as well as criteria, which allow solutions to be brought to them. The description of decision problems uses mathematical language because it is a universal language, on the one hand, and, on the other hand, because it allows for the use of powerful analytical tools" (Robert Kast, Decision Theory, 2002).

The analysis phase must therefore embrace the largest possible amount of data by making the "right" inferences. Herein lies the legitimacy of the decision: it is proportional to the quantity of data analyzed "correctly" beforehand. In this regard, AI represents a major opportunity. Where our cognitive abilities reach their limits, it seems to know none. Its functioning—algorithmic and utilitarian—thus opens the way to perfectly logical and objective decisions, or at least decisions qualitatively superior to those the human mind could produce.

Rooting decisions in the real world

Reality proves to be more nuanced. Despite its power, AI exhibits numerous technical flaws, such as hallucinations, data poisoning, and unexplained malfunctions, that can distort its analyses. But above all, and this may seem paradoxical, a decision that is perfectly logical in terms of its inferences is not necessarily a good decision.

"The algorithms that move from one state to the next—that is, which perform an inference—must therefore respect logical norms: they must only perform 'good' inferences, namely those that preserve truth. But this is not enough to achieve the set goal" (Andler, 2022).

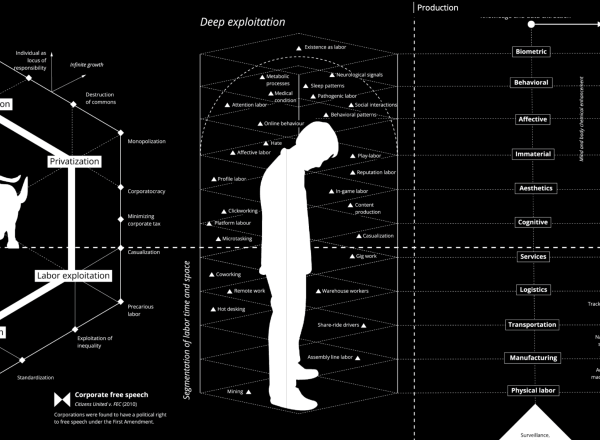

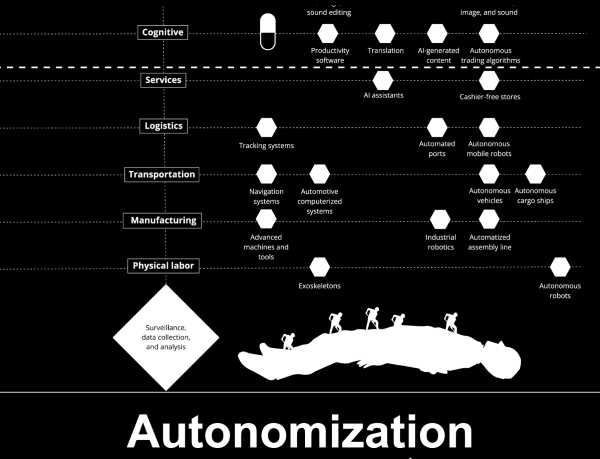

Indeed, the quantitative accumulation of good inferences is not enough to reach a qualitatively complex objective. In other words, AI excels in situations where the goal to be achieved is relatively simple. For example, based on financial data, AI can propose cost optimizations if its sole mission is to maximize efficiency. However, the situation becomes more complex when the objective integrates the subtler dimensions of the real world. AI will be of limited use if the formal goal of cost optimization must be reconciled with a multitude of other goals, more or less formal and tangible, such as strengthening the employer brand, employee engagement, or ethical positions in favor of job retention.

One step ahead of the machine

AI is a formidable tool for formal data analysis, but it seems devoid of political intelligence. It fails, for example, to grasp a team's atmosphere, our sources of anxiety and joy, our sensitivities, our needs, or our moral boundaries—all of which are data points we sense more or less consciously. As military leader Antoine Naulet reminds us: "AI tears the veil of uncertainty. But it does not lift it" (Naulet, 2019).

Using AI well therefore means clarifying our individual and collective goals and intentions, so as to cultivate the critical capacity needed to judge its results. This reflection on our culture will allow us to define what we want and what is essential to us, in order to stay one step ahead of the machine: "Culture is the depth that allows for the ethical understanding that guarantees the long term; AI can optimize our gains while destroying the planet; only humans can and must have a view beyond their objectives" (Naulet, 2019).

Let us not yield to the siren song of AI's rationality. It misses all those seemingly irrational decisions that turn out to be good for us, for others, and for society. We sometimes choose to lose today without knowing what we will gain tomorrow, because we know our world is infinitely complex: it is living and unpredictable, and no machine can "calculate" it. In those moments, the political community, and the principle of trust that governs it, take over. In times of uncertainty, must we not arm ourselves with trust as well as AI in order to make the right decisions?

Illustration: Calculating Empires: A Genealogy of Technology and Power Since 1500, by Kate Crawford and Vladan Joler (2023)