AI has established itself as the dominant subject in the business world and beyond. While some see it as a useful tool for drafting emails, or as entertainment for generating their portrait in the style of Miyazaki, within companies the use and application of AI are strategic. 88% of companies report using AI regularly in at least one function, even if the majority of them are still in experimentation and pilot phases (Singla et al. 2025). But while a third of companies have begun deploying their AI programs at scale (Singla et al. 2025) and often communicate extensively on their AI integration, the use of AI at scale (that is, across the entire organization and in multiple functions) is still far from a reality.

The weight of culture in AI deployment

AI is still at the stage of potential: it could bring about transformations on a small or large scale, points out Professor Aaron J. Barnes, a specialist in research on intercultural consumer behavior. But its real impact, whatever we might wish, is not yet very clear.

One would expect its large-scale adoption to increase as the technology progresses and becomes more efficient at executing tasks. Strangely, research suggests this is not the case. Not only is AI adoption uneven (varying by geographic region), but so is its acceptance. Barnes highlights the disparity between "Western" and "Eastern" countries, with the former perceiving an advantage in specific AI applications and the latter viewing AI as an overall benefit to society (Barnes et al. 2024).

Barnes postulates that this division is linked to differences in cultural values between countries. For example, the difference between individualistic and collectivistic values, and the strength with which they are expressed in a given country's cultural context, can influence AI adoption.

The diversity of cultural perceptions of AI

While it may seem perfectly rational to "adopt AI," cultural beliefs and values play a crucial role in defining the certainties we assume are shared: "rationality," or even "success." "Perhaps the most relevant element that differs culturally in its meaning is the definition of the self" (Barnes et al. 2024). This is all the more pertinent as technology is increasingly used as a mediation tool in the relationships between the "self" and others. The boundaries of these relationships are defined by and vary according to a specific cultural context, which means that AI can be seen as an extension of the self or as external to the self.

Barnes shows that when AI is seen as external to the self but not more powerful, it can be perceived as a threat, particularly in cultural contexts with individualistic tendencies. For example, in Western contexts, resistance arises against automation in fields that touch upon identity (such as creative or artisanal activities) out of fear of losing control or the meaning of one's actions. Conversely, when AI is considered both external and more powerful than oneself, it is often better accepted; factors such as religiosity can strengthen trust in AI. When AI is viewed as an extension of the self, it may be better accepted because we attribute familiar characteristics—those of human intelligence—to the AI. Cultures where the predominant belief is that intelligence increases gradually, such as East and South Asian cultures, may more easily trust AI when its learning capacity is emphasized.

Consequently, the integration of AI in companies is more of a cultural challenge than a technological one. Differences in representations across locations, professions, and ages affect the perception of AI and its adoption. So, how can we facilitate this adoption and, above all, its successful implementation within companies?

The challenge of integration in the company

The MIT report providing an overview of AI in companies in 2025 highlights a "generative AI divide." It suggests that the large-scale deployment of AI in companies has a low return on investment, measured by its impact on the P&L (Challapally et al. 2025). It concludes that most enterprise AI (meaning internal AI) fails to reach the production and implementation phase (a conclusion disputed by other experts).

Naturally, real-world practices trump official processes, and most employees report regularly and informally using generative AI via personal subscriptions for their individual tasks, while struggling to adopt their company’s internal generative AI. They complain that these internal AIs lack memory and learning capabilities, but above all, that they are ironically disconnected from existing workflows and processes.

Co-constructing to progress

The adoption problem is therefore not where we think it is, and it is more complex. The "generative AI divide" is not the inability of employees to use AI in their daily routines, but rather the inability of companies to weave their internal AI into those daily practices. This classic mismatch in IT program deployment leads employees to use "their" LLMs informally because they feel the need for these flexible and responsive tools—but in doing so, they expose themselves to security flaws and information leaks.

There is, therefore, a paradox that offers companies an opportunity to understand the needs and the strategic application of generative AI in their business, rather than blindly joining the AI race. IT departments and those responsible for implementing enterprise AI must first attempt to understand very precisely the AI needs of employees and business functions, which are likely to be highly diverse within the organization and specific to each role.

This requires opening a long-term dialogue between all functions and departments, actively involving different stakeholders, and engaging in the co-construction of generative AI tools so that they are successfully implemented and adopted. This paves the way for the creation of collective generative AI solutions that are context-adapted and, therefore, as close as possible to individual needs, enabling the fulfillment of the company's strategy.

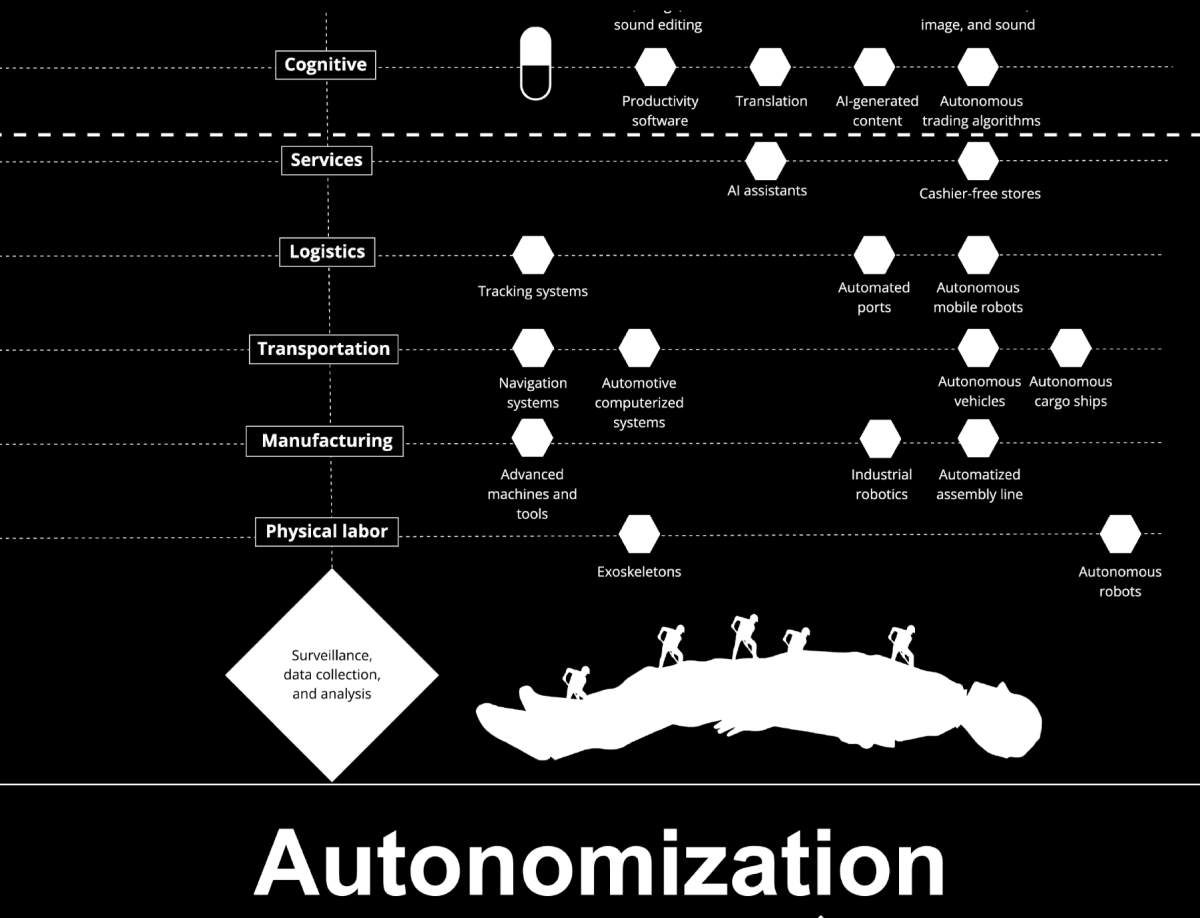

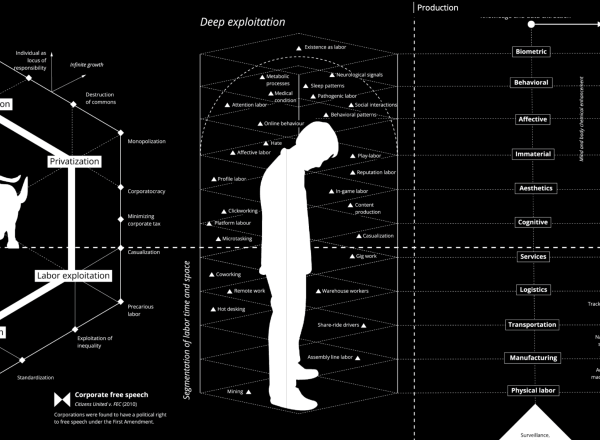

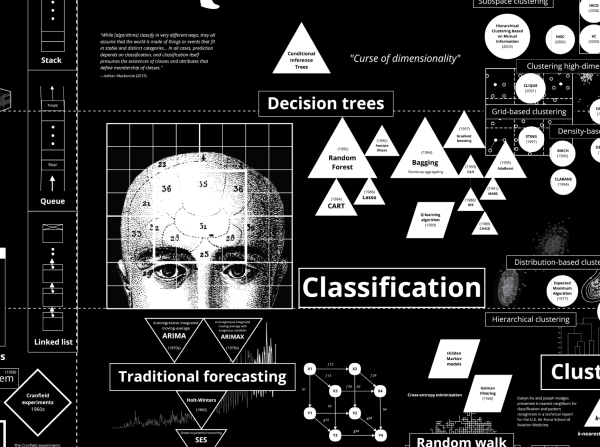

Illustration: Calculating Empires: A Genealogy of Technology and Power Since 1500, by Kate Crawford and Vladan Joler (2023)